This site hasn’t been updated since 2012. You can still peruse who I was back then, but know that much of what I think, feel, write, and do has changed. I still occasionally take on interesting projects/clients, so feel free to reach out if that’s what brought you here. — Nishant

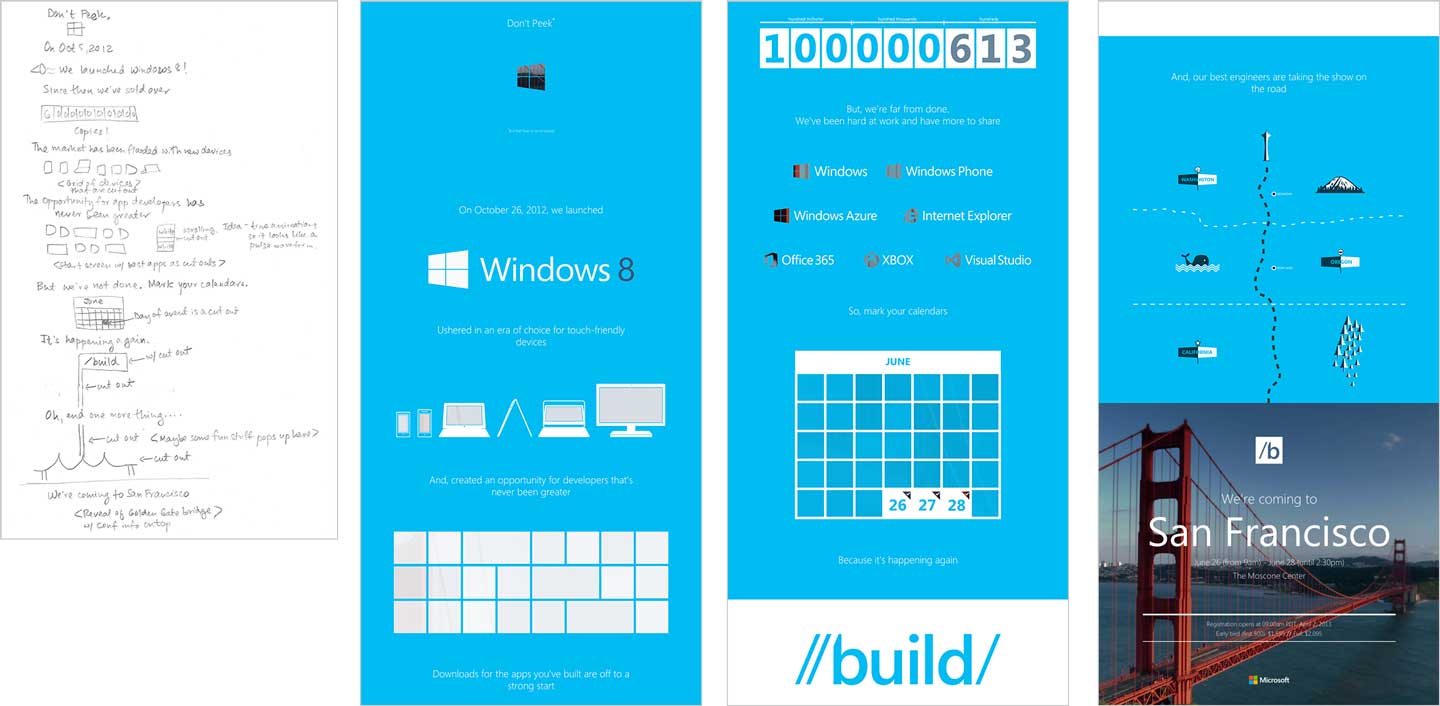

I recently left Microsoft to start a company named Minky with my wife, Pita. We never planned on doing client work, but when my ex-manager and champion of good things at Microsoft, Jeff Sandquist, invited me to come on board to help design the Build 2013 experience, I (or should I say, Minky) couldn't resist. Having collaborated on the creative for the 2012 conference with the 3 amigos and Jeff, I was excited at the prospect of helping evolve the Build brand.

Yesterday marked the launch of a simple, one-page announcement site for Build 2013 that Minky helped design and build in roughly a week. The concept relied on faking masks in the browser to reveal parts of an underlying image as the user scrolled. With weak browser support for masks, the task proved particularly tricky to implement responsively across the target browser stack because of known sub-pixel rendering issues with percentages in WebKit browsers (let's save that for another day). All in all, despite the apparent simplicity of the design, it turned out to be terrifying to pull off.

What you might not have guessed, though, is that most of my fear was grounded not in my ability to implement the design successfully, but in my ability to implement it while hitting the invisible but omnipresent bar of perfection we set for ourselves as web designers: the eternally moving target characterized by an elusive set of front-end development best practices and techniques.

So, let's talk about perfection.

Frank Chimero recently tweeted —

My favorite feature of the web is View Source.

— Frank Chimero (@fchimero) March 21, 2013

Indeed, I couldn't agree more. But there's a downside, captured in another tweet, this one from a friend, Susan Robertson:

As I'm doing this responsive design I worry I'm doing it wrong and anyone who looks at the source will laugh - this stuff is hard.

— Susan Robertson (@susanjrobertson) June 27, 2012

For front-end web developers, the sentiment captured by her tweet, and the uneasiness that typically follows, should be familiar. One aspect that sets front-end web development apart from the rest of software development is that the code you write is literally one right-click away from anyone who wants to see it (and let's face it, that's the first thing we do to each other's sites).

While we take much pride in our craft, this exposure causes a level of anxiety similar to what many of us feel on stage with hundreds of pairs of eyes staring at us. As Susan Cain writes in her book, Quiet: The Power of Introverts in a World That Can't Stop Talking:

One theory, based on the writings of the sociobiologist E. O. Wilson, holds that when our ancestors lived on the savannah, being watched intently meant only one thing: a wild animal was stalking us. And when we think we're about to be eaten, do we stand tall and hold forth confidently? No. We run.

The thought of peers viewing our source causes our reptilian brain to voice a tiny question that inevitably turns into a shrieking echo by the time we approach the finish line —

Will my code hit the bar of perfection?

Dustin Curtis' piece captures our innate, often borderline insane, tendency to seek the best, i.e. the perfect. We've all felt that way about something or someone at one point or another in our lives. To me (I write this right after a two-week Eastern European vacation), perfection is like that first bite of Sachertorte served to you in a café perched on a cliff with a breathtaking view of Wolfgangsee. But I know that not everyone shares my view. Certainly not my wife. She'd much prefer some apple strudel. And to even approach perfection, it'd need to be served with vanilla ice cream and a Melange. Really, if you were to press her, she'd admit that the final ingredients would also involve a soft, freshly laundered blanket and a Weimaraner to cuddle with.

We've made peace at some level with the notion that our tastes are subjective, a topic that I've written about both here and here in the past. Tastes differ from person to person, and we acknowledge that when it comes to taste, it isn't a matter of absolute right or wrong, but of what's right for me. Seemingly conflicting tastes can thus co-exist in a world where, even as we mock each other's tastes, often tastelessly, we strive to accept each other's umwelts, to borrow Jakob von Uexküll's famous term for the subjective world each organism perceives.

But when the conversation turns to anything that could remotely be considered through an objective lens, or more accurately, as having the possibility of one right answer (beauty, logic, mathematics, politics, religion, music, and literally everything in the world, including our beloved front-end web development practices, fits this bill), all bets are off, we say. As irony would have it, for anything that you think may be judged only subjectively, there are usually at least two people who believe the opposite: that objective reasoning may be applied to that context to reap the only right, i.e. perfect, answer. And all of a sudden, we're not talking about tastes or sophisticated choices anymore, but facts (cold, hard, statistical, empirically reproducible laws of nature), and we're ready to battle it out with theorems, axioms, rules, laws, and best practices.

But could it be that even the facts we embrace and the sciences we worship often need to be measured, much like taste, in relative rather than absolute terms? Could it be that our facts, in the right light, much like our tastes, are imperfect?

Back in the early 1900s, the mathematician and philosopher Bertrand Russell discovered a paradox at the heart of set theory that now bears his name. Russell's paradox is best explained by an excerpt from Fermat's Enigma: The Epic Quest to Solve the World's Greatest Mathematical Problem by Simon Singh:

Russell's paradox is often explained using the tale of the meticulous librarian. One day, while wandering between the shelves, the librarian discovers a collection of catalogues. There are separate catalogues for novels, reference, poetry, and so on. The librarian notices that some of the catalogues list themselves, while others do not.

In order to simplify the system the librarian makes two more catalogues, one of which lists all the catalogues which do list themselves and, more interestingly, one which lists all the catalogues which do not list themselves. Upon completing the task the librarian has a problem: should the catalogue which lists all the catalogues which do not list themselves, be listed in itself? If it is listed, then by definition, it should not be listed. However, if it is not listed, then by definition it should be listed. The librarian is in a no-win situation.

Russell's paradox laid the groundwork for the publication of Kurt Gödel's Theorems of Undecidability (better known today as his incompleteness theorems) in 1931. Gödel's theorems proved that any consistent formal system powerful enough to express basic arithmetic contains statements it can neither prove nor disprove, and cannot prove its own consistency. In other words, mathematics as it existed then (and today) could never be logically perfect. Until then (and even in many circles today), mathematics was heralded as the only logically consistent science there was: the only surety, or as it were, "perfection", in a world full of subjective flip-flopping. But Gödel's theorems pretty much butchered that sacred cow.

The fact that mathematics has massive holes at an axiomatic level is hardly astonishing, though. After all, the history of not just maths, but all the sciences is rife with examples of errors, gaps, contradictions, and embarrassing gaffes. Pluto recently losing its planetary status, the recent financial crisis exposing the fragility of the rational model of economics, the use of lobotomies to cure psychological illnesses, the absence of the zero in mathematics for centuries, doctors prescribing low-fat/high-carb diets to diabetic baby boomers, the centuries-old hypothesis that the earth was flat: each generation provides ripe material for the stand-up comedy amateur night that is our collective scientific stage.

This is not to say that all things in the universe are arbitrary, that there are no truths, or that everything that is right today will actually be proven wrong in the future. Rather, it is our ability to identify truths accurately that we must question constantly, not just for topics entirely unfamiliar to us like mathematical axioms, but for the very topics that are most familiar to us.

Indeed, and we know this deep down, our fallibility is most tested and exposed when we're in our comfort zones.

There are a tremendous number of patterns and practices that we employ today as web designers and developers that we collectively saw as absolutely wrong a few years ago and will likely see as wrong at some point in the near future. Or, as several characters repeated in Battlestar Galactica, "All of this has happened before and all of it will happen again."

Take the golden age of Flash, when "immersive experiences" took over the browser viewport and presented users with "highly interactive" (but generally unintuitive) experiences where straightforward ones would have been better suited to the job. Ask anyone who "did Flash" in the early 2000s and they'll tell you that we're back in those days, but this time with HTML5. The introduction of the redundant <article> and <section> tags a few years ago, an issue I took up after our very own defender of that which is sensible, Jeremy Keith, first carried the torch, is another case in point that brings back memories of cyclical contradictions in the ongoing standardization process. The flip-flopping around the use of vendor prefixes to access proprietary browser features, many of which were (and are) not only non-normative but far from being proposed for standardization, is another outrageous case of history repeating itself. The "works best in Chrome" phenomenon, the comeback of the formerly frowned-upon practice of using divs to mark up our grids, the cyclical CSS architecture debate... "All of this has happened before and all of it will happen again." Heck, even the social issues in our industry continue to play like a broken record.

I suspect Russell, Gödel, and our favorite Galactica characters would all agree on one thing if they were in our industry: front-end web development and aspirations of code perfection are fundamentally incompatible. While it takes hundreds of years for mathematics, unarguably the most logical field in the world even if not a perfect one, to change at a fundamental level, the Web counts in months, even days at times. And when it comes to the profession of a web developer, perfection of the craft itself is so much of a moving target that bending over backwards to write the most elegant code or to keep up with the latest best practices, while addictive in the same way competing in triathlons is addictive, can ultimately be self-sabotaging, hurting both the user experience and the morale of the developer.

But good luck convincing our brains of that, right? My hand is literally twitching to type a rebuttal at this very instant about how a laser focus on perfection of the craft doesn't take away from delivering the best user experience, rather it enables it. But that post has already been written a few thousand times over, so to my dear amygdala, I say: Relax!

Even if the wisdom of legendary logicians (one of them a Nobel laureate) and badass Cylon enigmas doesn't do much to convince us, we should be able to find some solace in the infinite wisdom of one of our own, Trent Walton:

I think there’s some value in realizing that most of the world doesn’t give two shits about what we do during the day…it reminds me not to take things too seriously and also not to worry about what anyone else thinks.

Touché, my amigo. Maybe we'll never convince ourselves to stop looking for the princess in the castle. Maybe what we really need to work on is realizing that there is no castle.